Data quality

FactSet’s vectorization service aims to improve agent accuracy

FactSet chief AI officer Kate Stepp discusses the importance of having AI-ready data in the agentic era.

Data heads scratch heads over data quality headwinds

Bank and asset manager execs say the pressure is on to build AI tools. They also say getting the data right is crucial, but not everyone appreciates that.

Data standardization key to unlocking AI’s full potential in private markets

As private markets continue to grow, fund managers are increasingly turning to AI to improve efficiency and free up time for higher-value work. Yet fragmented data remains a major obstacle.

AI strategies could be pulling money into the data office

Benchmarking: As firms formalize AI strategies, some data offices are gaining attention and budget.

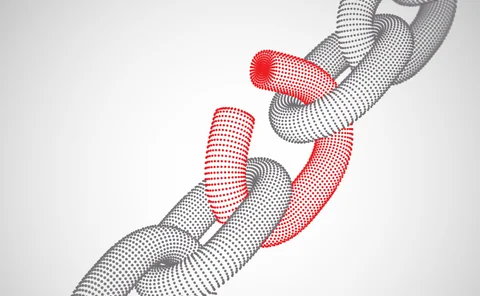

Banks hate data lineage, but regulators keep demanding it

Benchmarking: As firms automate regulatory reporting, a key BCBS 239 requirement is falling behind, raising questions about how much lineage banks really need.

AI & data enablement: A looming reality or pipe dream?

Waters Wrap: The promise of AI and agents is massive, and real-world success stories are trickling out. But Anthony notes that firms still need to be hyper-focused on getting the data foundation correct before adding layers.

Data managers worry lack of funding, staffing will hinder AI ambitions

Nearly two-thirds of respondents to WatersTechnology’s data benchmark survey rated the pressure they’re receiving from senior executives and the board as very high. But is the money flowing for talent and data management?

AI enthusiasts are running before they can walk

The IMD Wrap: As firms race to implement generative and agentic AI, having solid data foundations is crucial, but Wei-Shen wonders how many have put those foundations in.

50% of firms are using AI or ML to spot data quality issues

How does your firm stack up?

Examining how adaptive intelligence can create resilient trading ecosystems

Researchers from IBM and Wipro explore how multi-agent LLMs and multi-modal trading agents can be used to build trading ecosystems that perform better under stress.

Fixed income data continues to challenge capital markets firms

A range of challenges facing fixed income market participants

AI fails for many reasons but succeeds for few

Firms hoping to achieve ROI on their AI efforts must focus on data, partnerships, and scale—but a fundamental roadblock remains.

Buy-side transformation requires data stabilization

The IMD Wrap: Insightful and actionable data tools require reliable and accurate underlying data. Max looks at some startling findings from recent reports that highlight the key data challenges that lie ahead.

LLMs are making alternative datasets ‘fuzzy’

Waters Wrap: While large language models and generative/agentic AI offer endless opportunities, they are also exposing unforeseen risks and challenges.

CDOs must deliver short-term wins ‘that people give a crap about’

The IMD Wrap: Why bother having a CDO when so many firms replace them so often? Some say CDOs should stop focusing on perfection and focus instead on immediate deliverables that demonstrate value to the broader business.

Bank of America reduces, reuses, and recycles tech for markets division

Voice of the CTO: When it comes to the old build, buy, or borrow debate, Ashok Krishnan and his team are increasingly leaning into repurposing tech that is tried and true.

In data expansion plans, TMX Datalinx eyes AI for private data

After buying Wall Street Horizon in 2022, the Canadian exchange group’s data arm is looking to apply a similar playbook to other niche data areas, starting with private assets.

Unleashing the power of data and technology in an age of digital transformation

How Bloomberg sees digital transformation and the strategies it employs to solve data and technology pain points for its clients.

Bond CT hopeful Etrading unveils free tape prototype ahead of tenders

The vendor hopes to provide the long-awaited consolidated tape for bonds in the EU and the UK, demonstrating its ability to do so through ETS Connect.

To unlock $40T private markets, Hamilton Lane embraced automation

In search of greater transparency and higher quality data, asset managers are taking a tech-first approach to resource gathering in an area that has major data problems.

Artificial intelligence, like a CDO, needs to learn from its mistakes

The IMD Wrap: The value of good data professionals isn't how many things they got right, says Max Bowie, but how many things they got wrong and then fixed.