Waters 25: A Look Back on the Last Two Decades

Waters examines some of the most important events in financial technology of the past 25 years.

Even—perhaps especially—in an industry where you’re only as good as your last trade, 25 years is a long time.

Few publications can claim to have been around for the quarter-century that has seen some of the greatest upheaval in history for financial-market technology, and as we celebrate our 25th anniversary this issue, Waters looks back on some of the key points along the journey.

From the rise of electronic and high-speed trading, through the development of cloud, and risk management coming of age, to now, where we are perhaps entering the age of artificial intelligence, we would like to thank our readers, past and present. We hope you will join us for the next 25 years.

The topics covered below include, in order: Regulation, the Global Financial Crisis of 2008, the electronification of markets, cloud computing, algorithmic trading, order and execution management systems, the rise of the chief data officer, cybersecurity, alternative data, risk management technology, the birth of fintech, distributed ledgers, and the terrorist attacks of September 11, 2001.

Regulation: The Shaping of an Industry

If you’re looking for a colorful discussion around the famed Stone Street establishments just adjacent to Wall Street, pull up a chair, or perhaps stand on a barstool, and proclaim in a high whisper that regulation is the greatest financial-services influence of the last quarter-century. Brows will furrow. And yet, even the most hardened trader will concede: it’s true.

Regulation’s influence on financial technology, and indeed on Waters, is profound. Consider that the Glass-Steagall Act was 60 years old and still law when the magazine was founded. After its repeal in 1999, the Commodity Futures Modernization Act calamitously declined to regulate swaps in 2000. Dodd-Frank and its roughly 2,300 pages saw to that, opening entirely new fronts for innovation. Meanwhile, Reg NMS laid out the groundwork for market data as we know it today. Equities dark pools, authorized by Reg ATS, brought a new wrinkle to electronic trading—though venue proliferation, a seemingly healthy mark of competition, has led to liquidity fragmentation and frustration. Regulators have taken on big, spirited projects of their own, like the Consolidated Audit Trail, at times with half-hearted regret. And reporting only gets more ambitious: Europe has taken that baton today with the far-reaching implications of the revised Markets in Financial Instruments Directive.

From the very beginning, regulation has proven a boon to fintech. By breaking down Glass-Steagall barriers, regulators gave a push to banks in the United States, prompting their transformation into technology powerhouses. It grew capabilities and amplified balance sheets, and some of the largest vendors in finance today share in that heritage. Of course, where only the leverage went and not the transparency, global markets froze up in 2008 in a way that would have seemed unimaginable 25 years earlier. Almost as suddenly, every tech provider—and even tech reporter—became a regulation expert, and remains so today.

Even in quieter, more peaceful times well off the front page of the Financial Times, rulemaking choices have built up, broken down, and re-engineered markets with consequences for liquidity, no small amount of controversy, and fresh openings for fast-moving tech. Today, regulatory standards increasingly shape how firms share, govern, commoditize and even “forget” their data. And in the post-crisis era—one marked by constrained capital, Adoboli-esque fraudsters, benchmark rate scandals, and billion-dollar fines—regulators now use tech to drive their own enforcement actions, as well.

“With technology, there are many examples today of a regulator and industry working together,” says Tom Sporkin, partner at law firm BuckleySandler and a former senior official at the US Securities and Exchange Commission. “For illustration, look at the Consolidated Audit Trail (CAT) project, overhauling the antiquated order capture system that has been part of the fabric of the financial markets for decades. While we know the CAT will revolutionize the future of market surveillance, informed SEC rule writing, and insider trading investigations, the more interesting aspect may be the advancements the industry makes with access to order data infinitely more complex than ever imagined.”

Indeed, technology’s relationship with regulation is innately dualistic, and never more so than today. It remains a great solver of regulation-driven problems. From small to big, hundreds of applications from electronic crossing networks and swap execution facility aggregators to artificial intelligence know-your-customer checks and real-time trade reporting engines have grown this way. Regulation couldn’t exist without the technology to deal with it. But regulation and fintech find themselves increasingly in a standoff, too. Banks question why tech startups—their potential disruptors, that is—are not regulated or viewed as systemically important. Regulators are sniffing around execution and the mysteries that may lie underneath. Meanwhile, not a C-level conference panel passes by without discussion of lost recruitment to Silicon Valley. Why? Too much regulation. For those reasons, they will continue to be bound together—if not always as happily as in the past—for years to come.

The Crisis: A Formative Moment

Waters has seen hellfire and, with a heavy heart, lived through it. No new member of the newsroom lasts long before learning of the publication’s tragic and extremely personal involvement in the September 11th, 2001 attacks in New York. Still, arguably the most formative journalistic event to pass through Waters’ pages came several years later, in the subprime mortgage crisis, credit crunch, sell-offs, and subsequent global financial catastrophe of 2008.

It was less a crisis than a slow-motion tumult—one so strong and destructive that even 10 years later, it remains a daily frame of reference. Of course, its wide-ranging impacts, both within and beyond institutional finance, are still being felt, too. But the series of events in 2008, their confused aftermath and the interregnum leading to today’s more stable markets left an indelible mark on technology—even if you put the years of Dodd-Frank drama that followed completely to one side.

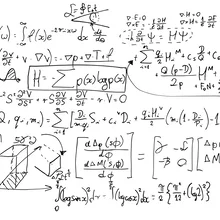

Take a cinematic example from Margin Call, which stylized the lead-up to the October sell-off. In an early scene, a young quant uses scenario analysis from his recently fired boss to model the value-at-risk across their firm’s asset-backed securities desk. The results, unsurprisingly, aren’t good and set in motion the rest of the film. But the greater surprise? The models are all on a USB stick. Chances are today, this back-testing would sit on a sophisticated cross-functional platform, spun-up on hosted infrastructure, and—regulators may not want to hear this—be analyzing credit valuation adjustments and even more exotic methods deployed by traders to lever up. In short, the crisis institutionalized risk technology. After the downfall of Bear Stearns and Lehman Brothers, and massive losses everywhere else, there wasn’t much of a choice.

“Post-crisis regulation reinforced several trends already underway in fixed income: concentration of market share amongst the top of the primary dealers; de-emphasis of proprietary risk-taking in favor of increasing electronic market making; and reductions in balance sheet allocated to ‘commodity’ business lines,” explains OpenDoor Trading’s Susan Estes. “Absent a shift in market structure, it was understood that the fixed income world will continue to be split into the haves and have-nots. In this sense, OpenDoor is the largest macro bet I’ve ever placed. The event exposed a tipping point, one demonstrating that the market is ready for a new trading paradigm.”

Equally interesting is what happened to the technologists at Lehman and Bear, themselves. Judging by these firms alone, the crisis was the largest redistribution of fintech talent in history (and it probably is still so, even if some would argue an even greater shift is subtly afoot today). Indeed, the number of chief technology and information officers in Waters’ pages with background at one of these once-heralded firms—even in this year alone—is astounding. And it is no coincidence: Lehman and Bear were responsible for much of the financial engineering that built contemporary markets, and great tech talent came of age in those fast times.

Senior executives, many of those C-levels, had the foresight to see an opening to do the same thing on the buy side. The attraction was twofold: first, investment managers would be looking to build operational and informational autonomy from their sell-side partners; second, that post-crisis constraints on banks would create the space for more buy-side-driven shadow-banking activities and commoditized execution services. Both, it turns out, have proven true.

In a way, this cohort represents the phoenix of the crisis story—though one whose ending they will surely hope to avoid repeating.

Electronification: Welcome to the Revolution

Electronic trading is Waters’ bread and butter—and that is an understatement. Without evolving electronification of the markets, market data would still be punched on ticker tape; there would be no direct market access (DMA) for the buy side; and brokers would still be placing orders from ‘dumb’ terminals and clunky turrets that look more like they belong in the original Star Wars trilogy. Sure, the phone is still plenty useful; we can all save a little nostalgia for exchange traders breathlessly bellowing into the last open outcry pits, as well, even if price formation is arguably better served by other means. But it’s simple: today, billions are spent every year on what you see on screen, and how to get your order filled before everyone else.

Outcomes remain uneven. On one hand, the last 25 years have witnessed an ever-more creative race to zero latency in equities, equity options, futures and to some extent foreign exchange. Deploying everything from field programmable gate arrays to microwaves to hot-air balloons in the drive to shave off microseconds, this race has also seen some of the most expensive per-square-foot real estate in the world found on northern New Jersey swampland inside exchange operators’ data centers, or suburbs that cling to the Chicago metropolitan area by their fingernails. The veritable cottage industry of high-frequency traders does not lack for juicy stories and entertainment that is innovative, bizarre, stubborn and at times even preposterous. But in its quest, it has also served to push proprietary trading really into a realm all of its own—with black boxes so fast, and algorithms so good, that slower flow doesn’t much stand a chance. Even the modern speed demons of fiber-optic cables are finding an upper limit to their endeavors, as shops close up or become absorbed by rivals across the world.

Meanwhile, the state of play in credit and fixed income remains broadly at the opposite extreme. In these markets electronification and price transparency have been slow to arrive—and for more illiquid instruments, they haven’t yet at all. Not for a lack of trying. From Treasurys to corporates to vanilla interest-rate swaps, a handful of electronic platforms—especially those affiliated with or owned outright by the institutional buy side—have made strides forward. But many of these offer request-for-quote and auction trading styles that fall short of genuine central limit order book liquidity and transparency. Similarly, many an ill-fated data provider has tried, and often failed, at real-time pricing in these markets, as well.

Call it a history of fits and starts. “Electronification of fixed income trading has not yet revolutionized the way asset managers trade,” says Michael O’Brien, head of trading at Eaton Vance. “Electronification over the past few years has largely meant more efficiently replicating traditional phone execution. The future, however, is different and the evidence where we look to is the traction gained by new trading protocols, new non-traditional liquidity providers and new regulations. Automation, artificial intelligence and aggregation are the next steps. The implementation of these new tools will result in significant differentiation in liquidity and execution quality among buy-side trading desks.”

Perhaps the most curious thing is what both groups of asset classes have in common. Even in an era of equities electronification, institutional investors still say they lack actionable, pre-trade transaction-cost analysis (TCA). For multi-leg trades and hedgers, shops are investing with partners to devise execution strategies that act almost as defensive maneuvers. It remains a paradoxical fact of life that the easier a market becomes to trade, the easier it becomes to be picked off. At times, many may sympathize with that old standby trader, slouched in the pit on the Merc’s last day of open outcry.

Electronification is great. But when you lose, the old ways still feel like the best ways—regardless of whether they actually were. As O’Brien puts it, “welcome to the revolution.”

Cloud: Revolution-as-a-Service

For junior Waters reporters of a certain age, it was a running joke. Freshly off being hired, your first news story is inevitably a cloud story; your first headline pun is unavoidably “rainy” or “stormy.” Of course, this was well before Amazon Web Services helped make Jeff Bezos into a $132 billion man and before every IBM commercial featured the phrase “in the cloud,” almost as if it were state-mandated. Ask a handful of curmudgeonly buy siders how much information they’re willing to offload into a public cloud—and you are bound to find a few holdover skeptics today (even among those who invest in cloud providers, themselves). As for everyone else: well, they’re firmly in the sky.

The advantages of scalability and pennies-on-the-dollar cost reduction are where cloud made its fame. Credit where credit is due: most of the push into the cloud-based as-a-service model (SaaS, IaaS, and so forth) in financial services was initially and, even in the face of early opposition, steadfastly vendor-driven. At first, it was also obsessively focused on the hardware side. But to look back on Waters’ coverage of the subject, one could argue that the eventual acceptance of cloud was owed to something more conceptual, in that it wasn’t the economics, alone. In part, this sea change had to do with proving what cloud can do on a computational basis—what it can do better, as well as cheaper. Whether that’s spinning up an advanced proprietary infrastructure that can handle terabyte-sized big data analytics, or a hedge fund-in-a-box offering that helps a new startup get off the ground as soon as initial funding is secured, cloud makes finance more competitive.

“The power of emerging technology today can bring immense value to a business, but can only do so with the computational power, storage, and complex analysis that can be provided by the cloud,” says Synechron CEO Faisal Husain. “Another benefit is of course lowering operating expenses and creating more scalability as a larger part of legacy infrastructure modernization and digital transformation initiatives. By going serverless for cloud adoption, institutions can see a shorter time to market, less wastage in time and effort spent, better stability, better management of development and testing, and variable costs based on usage. This agile approach to cloud brings firms closer to their driver of increasing cost pressures and fills the prerequisite for firms to take full advantage of emerging technology, to remain on the cutting-edge of innovation.” Philosophically, few will object to that.

The other flashpoint, more strangely, came in 2012 when Hurricane Sandy directly hit the New York area. The massive storm caused $70 billion in damage; exchange trading had been called off early in its wake. But after Sandy many operations chiefs and technologists took the opportunity to evaluate their business continuity processes (BCP), reorganize them, or bask in the smart choices they had made prior thereto. For those affected who got it right, there was one commonality: besides a little bit of luck, they and their BCP providers were early adopters of the cloud. As the Hudson River washed into bank lobbies—or in the Waters newsroom’s case, the East River—it turned out that cloud providers care a great deal about availability and disaster recovery. And they are good at it. The industry took notice.

Just where does this revolution go in the coming years? While there is always a rejiggered service model to try out or another penny to shave off, in ten years’ time cloud has become conventional wisdom in a space that once, not so long ago, was deathly afraid of storing data outside the four walls—or using a vendor who does the same. Few innovations have proven more influential.

Algos: Kingmakers and Troublemakers

Sidewinder. Work, and Pounce. Sumo. The names of algorithms are an invitation to anthropomorphize them—and ever since 2004, when this “relatively new” concept first began regularly showing up in Waters’ coverage of “cyber-trading,” they have been an unfailing curiosity for the magazine. That curiosity splits two ways. First, there might be no more important sell-side execution offering today than the algo wheel, and no greater influence on execution across a number of asset classes. Second, algos—even terrific algos—and their makers must suffer the same struggle for survival as human traders. Just ask the owners of those mentioned above: Instinet, Lehman Brothers and Knight Capital.

One could say that algorithms are a natural outgrowth of electronification and low-latency trading—that may be so, but it’s much like building a beautiful Formula One racetrack and letting it sit empty. You still need the souped-up cars. To look across the evolution of algorithmic trading, one finds key patterns and debates that have shaped trading today: norms around different types of trading behaviors that algos should mimic (i.e. Sweeping, Vol, TWAP and VWAP); distinctions between lit and dark trading that have modulated over time; measurement of best execution, including required data; effective block trading—the rise of algos had a part to play in all of these. Over time, they have played a parallel role in killing certain trends, as well—complex-event processing, anyone?
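For readers less familiar with the benchmarks named above, the arithmetic behind TWAP (time-weighted average price) and VWAP (volume-weighted average price) can be sketched in a few lines. This is only an illustration, not any broker's algorithm: the `Trade` type, the sample prints and the assumption that prices are sampled at equal time intervals (which reduces TWAP to a simple mean) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Trade:
    """One observed print; hypothetical type used only for this sketch."""
    price: float
    volume: int


def twap(trades: Sequence[Trade]) -> float:
    """Time-weighted average price.

    Assumes the prices were sampled at equal time intervals, so every
    observation carries the same weight.
    """
    if not trades:
        raise ValueError("need at least one trade")
    return sum(t.price for t in trades) / len(trades)


def vwap(trades: Sequence[Trade]) -> float:
    """Volume-weighted average price: each price is weighted by its volume."""
    total_volume = sum(t.volume for t in trades)
    if total_volume == 0:
        raise ValueError("total volume must be positive")
    return sum(t.price * t.volume for t in trades) / total_volume


# Made-up sample data: a small print at 100 and a larger one at 102.
SAMPLE_TRADES = [Trade(price=100.0, volume=100), Trade(price=102.0, volume=300)]

print(twap(SAMPLE_TRADES))  # 101.0
print(vwap(SAMPLE_TRADES))  # 101.5
```

In this made-up sample the larger print at the higher price pulls VWAP above TWAP, which is the gap an execution algo tracking one benchmark or the other is designed to manage.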

This lead role doesn’t always go so swimmingly for algos, though. And sometimes, the failure is calamitous. The 36-minute-long, trillion-dollar Flash Crash in 2010; the disastrous collapse of aforementioned Knight Capital; and numerous smaller glitches all prove that algos are troublemakers, too. The contour of these events speaks to a little-acknowledged fact: generally, the majority of algos are well-behaved and not particularly sophisticated. They are blunt instruments, and simply do what they are designed to do. If this, then that, essentially. Unfortunately, it only takes the mere hint of an error in the market (typically born of lazy coding or pre-production testing) and they pile on with cascading effect.

Meanwhile, opacity around the algo business has built up, occasionally punctured to reveal serious questions of execution immediacy and fairness—most recently in broker-owned dark pools. Finally, though regulators have made far more of an effort in recent years, these events have repeatedly exposed just how little grasp market overseers have of algorithmic trading. Exchange and single-stock circuit-breakers have been introduced to curb extreme swings in price, not so much precise tools as temporary stopgaps to nurse the damage when an algo has gone rogue.

For all of the benefits algos have ushered in, and winners they have made, there is still much to learn—and perhaps regulate.

Crossing Over: Hybrid Platforms’ Gambits and Flops

The philosopher Thomas Kuhn famously argued that scientific revolutions emerge from crisis, “inaugurated by a growing sense [sic] that an existing paradigm has ceased to function adequately in the exploration of an aspect of nature to which that paradigm itself had previously led the way.” It is probably natural that fintech systems evolve in the same way. As historical boundaries give way to shifts in functionality and hybridization, the fintech provider community is responding to a collective need. It is sociological, like Kuhn thought, as much as technical.

These shifts don’t happen cleanly, of course, and neither has the emergence of hybrid systems crossovers in recent years. They take on a life of their own. Take the two most obvious: the order and execution management system (OEMS) and the investment book of record (IBOR). For many, an OEMS—which would align order generation, execution algorithms and risk-check analytics, presumably with benefits of speed, workflow efficiency and user experience—is a kind of Holy Grail. The industry has pursued some version of an OEMS for at least a decade, and the closest successes have grown organically from one species or the other, rather than started from scratch. Yet oddly, many in this space don’t shout “OEMS” from the rooftops; in fact over the years there has been a persistent reticence around referring to these systems as crossovers, even if that is plainly their intention. It is a kind of unspoken rule for a lukewarm revolution—something several doomed startup entrants have learned. As one Waters staff editorial deftly summed it up in 2008, “EMS and OMS convergence? Not so much.”

That mostly remains true ten years later, though as Goldman Sachs CTO Michael Blum says, it is certain to remain an objective as well. “Banks have pushed more towards quantitative fund clients and increasingly sought to bring multi-asset trading platforms to bear,” Blum says. “As a result, we have seen the trend of crossover order and execution management systems merging together, as clients seek to move closer to the point of execution. Some may want risk checks only; others want access to the smart order router; some are analyzing execution down to microseconds while others are less sensitive to slightly-higher latency. They are picking and choosing access points to banks’ liquidity depending on those needs, and so you can’t have the hops from OMS to EMS or other infrastructure. It’s increasingly like high-frequency trading firms, where everything is tightly integrated and more proprietary software is being introduced. Ultimately that should promise more systems convergence going forward.”

IBOR, on the other hand, fully embraced its moment—perhaps to the extreme. Already many years old, the investment book of record arose from the dustbin of history in the post-crisis period as investment managers and institutions sought a single source of truth across portfolio management, accounting and order management functions—a kind of security master on steroids. Providers from each of those spaces took a hearty stab at it, and for a couple years at buy-side events, you couldn’t get away from IBOR if you tried. Eventually, the hullabaloo ebbed, and it left some interesting questions: the IBOR implementation projects we would learn about at Waters were the genuine article—ambitious, years-long gambits with measurable results. But in reality, how many functioning IBORs exist at major pensions and fund managers just a few years later? How much of it was a cheeky ploy to get in a buy side’s tech stack?

The OEMS is a hybrid many could secretly use, but few will vocally embrace. IBOR was openly hyped and found its share of public acceptance, but today has probably left many buyers-in wanting more. Such is life in the clunky business of evolution.

Data Makes the C-Suite

A range of external forces like trade electronification and regulatory pressures have shaped the availability (and limits) of market data and reporting data over the years. But much has changed with respect to the internal organization of data, too. The C-level priority assigned to that data has followed suit; today it has at least three historical layers.

Early on, the new concept of enterprise data management (or EDM) grew out of necessity, as electronification swept up settlement and other post-trade activities, and combined with pre-crisis investment bank mega-mergers to create burgeoning middle-office operations that needed new shape. Besides running a better shop—completing transactions faster and caching more reference data along the way—this environment also began a natural competition for influence among major institutions as standardization initiatives took root, and those still continue to play out today. The bank that could demonstrate its own classification methods best (and then persuade clients and counterparties to adopt them) could make a strong argument for steering the process and molding the industry standard in its image—yielding sizable advantages going forward. EDM began with the enterprise, but it turns out it could also serve as a kind of force multiplier.

More recently, data executives have had to cope with post-crisis regulatory requirements—and for that matter, shareholder inquiries—that ask fresh questions of firms’ data, demanding aggregation capabilities and governance frameworks on a wider scale than ever before. That was the initial genesis of the chief data officer (CDO) trend that took hold in the middle part of this decade. After all, informational availability (or lack thereof) had been painfully exposed during the crisis, while reengineering data processes is notoriously tedious and not every CTO or CIO is in the data weeds enough—or, for that matter, had the desire—to take the lead. Call it a CDO or something slightly different, this was a person specializing in bringing order to particular points of chaos. It also included, for the first time, many large firms on the buy side that had never experienced these kinds of requirements.

But one of the reasons why the CDO role remains a tumultuous, and fascinating, one is that it almost immediately took on a whole new bent with the explosion of new data sources and data exhaust, technologies to capture and exploit those, and applications built around them. In an unfair twist, today’s high priority upon data usage has tested the frameworks developed by CDOs almost before they are put into place. In 2018 the role still requires the same powers of institutional persuasion and ship-steering as before, but far more emphasis on data science, partnering startups and even borrowing elements from data-forward industries. It’s part master tactician, part magician—a tricky mix many CDOs continue to try and balance. This much is certain: EDM was once a plodding, steady-handed concept. Today, the stakes are raised, and it’s not for the faint of heart.

“Data definition, quality validation and disciplined data management operations are imperative to ongoing success in our industry,” Brian Buzzelli, SVP and head of data governance at Acadian Asset Management, says. “The CDO or head of data management has become one of the most strategic roles to deliver clean, high-quality data to satisfy regulatory precision, manage the complexities and diversity of data, feed our insatiable appetite for near infinite data volumes, and enable our exploration of AI and machine learning as we design new financial strategies hunting alpha. Just like data, the data leadership role requires a multi-faceted set of business and technical skills and now touches every function driving data manufacturing from technical information architecture and operational excellence to commercial alignment, jurisdictional considerations, and innovation.”

Into the Breach

Few developments make technologists pine for the days of stock tickers and settlement by paper certificate. Cyber is surely one of them, and perhaps no trend has spread so quickly in recent years as bulking up the security fences.

Informational security concerns aren’t new, particularly in financial services—where protecting the identities of clients and counterparties has always been paramount. To sophisticated thieves, breaking into a bank vault isn’t just about the gold that’s tucked away; the ledgers and documentation could actually be far more valuable. But unlike heists of old that took some time, stress and corporeal guile, today’s hackers move faster, slip through thinner cracks, cast the net out far wider, and flee the scene (or misdirect defenses) in ways that are impossible to completely deter or detect. No one likes to admit it, but this is a constant losing battle. As many chief information security officers (CISOs) have told us, it’s a question of magnitude, risk-rating data protection measures and above all, raising human beings’ own awareness.

Waters first began reporting in earnest on the rise of CISOs in 2013, and like many trends, our attention was earned at an ex-finance inflection point: the Target breach that saw millions of US consumers’ credit card information scraped by malware. That situation ended with a record $18.5 million settlement, which seemed a big number at the time. But it is peanuts compared to enforcement actions seen every year in financial services. Rather, what probably raised more eyebrows among banks and fund managers is how easily a massive, and by all accounts well-secured, company like Target was beaten: by a regional HVAC contractor first becoming compromised. It was a tough reminder that everything even remotely near the network—from a seemingly harmless email link to a sensor on the office printer meant to ping a vendor when the ink is low—is susceptible.

Serious ramifications followed for a financial technology ecosystem that is highly dispersed and replete with key-point dependencies, right around the time firms were beginning to consider moving more data outside the walls to the cloud. Five years later, the core issues for cyber posture remain essentially unchanged: resource allocation and cascading defenses; using artificial intelligence to up intrusion detection; better education and simulations; and more due diligence on any third parties plugged into the stack.

“It’s satisfying to see the role of CISO elevated today to a senior management business function, as opposed to strictly IT,” remarks Bob Ganim, CISO at Mizuho Americas. “CISOs need to fully understand the business; outside business environment; regulatory landscape; technology used; threat landscape; security controls needed; and the organization’s risk appetite. This is why the role is moving, in many cases, from reporting to the CIO, to reporting to the Chief Risk Officer or others in the C-suite. It is an integral part of the management team. And it’s become a fairly collegial network, with CISOs sharing information.”

Indeed, whatever the headlines or contour of the latest hack, cyber remains in the collective consciousness and isn’t going away. The allure of distributed ledgers and ongoing discussion over data security for the US consolidated audit trail (CAT) project are proof enough that, if anything, it will play a larger role in market mechanics, industry initiatives and technology change going forward. Sure, like their ancestors in the 20th Century, most hackers are rational and have an endgame. But not every cybercriminal is interested in a theft or ransom; some just want to impress themselves and take a system down. For large organizations, a few hours’ disruption alone could cost tens of millions in missed trading and market opportunities, even apart from potential data loss. For smaller ones, it’s no hyperbole to call the threat existential.

“A single firm may not observe a cyber threat pattern,” concludes Ganim. “But together, we can.”

Alt Data: Signals and Chimeras

How much data is too much? Healthy debate remains around that question, but for most of Waters’ history, the tendency has been to lean towards “more is better.” Faster and more reliable data has allowed the investable universe to expand significantly over the last 25 years, from emerging markets and exchange-traded funds (ETFs) to weather derivatives and off-the-run Treasuries liquidity. Throughout, the trend has been to call any data beyond traditional market data and trade reference data “alternative.” But what might have been deemed bleeding-edge alt data in the early 1990s is not so much today.

It was not so long ago that trading on trending social media was novel, and data drawn from sensors on natural gas pipelines or satellite imagery of big-box store parking lots was considered new-fangled. In addition to fundamentals and technical factors, today’s stock-pickers are apt to use a mix of these kinds of inputs before taking a position or putting on an option. The same can be said for data delivery: for instance, sentiment scoring and analysis—drawing on natural-language processing (NLP) that can summarize positive or negative feedback from news in milliseconds—has proven an invaluable tool to quickly realize the value in alt data feeds.

Innovation on both ends has helped to bring this information—much of it already out there, if unruly and hard to verify—into the mainstream. When deployed effectively, it can absolutely be a source of edge. “Today the race is on to figure out the most effective way to combine the best aspects of fundamental and systematic investing,” as Balyasny Asset Management’s chief data officer, Carson Boneck, puts it. “It’s an extremely complex problem, and data and technology are the linchpins. We believe that firms that invest in the infrastructure, machinery and emerging technologies to harness data at scale will win.”

In fact, the last 25 years have already taught a few object lessons in alt data as well. For one thing, frankly, it is far from a sure thing. This data needs context, historicization, and backtesting to be proven out and worthy of portfolio management decisions on its own; even measuring that reliability remains more art and skill than science. Ironically, as more and more of it becomes available, predictive and trustworthy, the alpha also decays. So a sense of tension will frequently exist between actioning this data quickly and being reasonably certain about it. That’s led to a growing graveyard of Twitter-based hedge funds and other gambits that went all in on alternative sources, too soon, only to see their secret sauce ultimately fail swiftly and spectacularly. Sometimes alt data is signal; other times chimera.

For another thing, more from the vendor perspective, building and monetizing an alt dataset is far from straightforward, both for startups and for large investment banks that can glean a ton of it from their retail banking and lending arms. Acquiring alt data, running it through the proper analytics for validation or usability, and delivering it while still fresh—this is neither a cheap investment, nor an easy one. At Waters we’ve catalogued dozens of success stories that have done just that with aplomb. But it remains tough to make a business case around a source of information, however unconventional, that is no more indicative than the pricing on a high-yield corporate that has traded twice in the last 60 days.

Still, some proprietary traders out there may view it as useful—it’s about segmenting and packaging the data to the right set of users, or caching it for later, and setting expectations accordingly. As one conference panelist put it earlier this year, you never know who might be able to use it as time goes on. For now, though, experience shows alt data is better regarded (and built) for its potential as a special kind of jet fuel, rather than the jet itself.

Risk: Swimming Among Black Swans

Defined as the possibility of loss or peril, risk seems an inherently bleak topic. And yet in financial services, including across Waters’ pages, it is everywhere. The focus is on defining and measuring that possibility, and being sober and disinterested about it: how often will events trigger a margin call on a derivative instrument; how many times and why will a collateralized loan obligation trade break; might an upcoming election result tank its country’s currency (and the debt denominated in it); how to gird against illiquidity or contagion in a given market? From the minutiae of trade settlement up to drivers of default and full-blown crisis, all of these different risk flavors have a part to play in today’s enterprise, and that is to say nothing of today’s standard for financial performance itself: risk-adjusted return on capital.

Ten years on, the residue of 2008 lives on at many institutions, and in financial technology. It would be a fallacy—or at the very least, incomplete—to say that risk managers were asleep on the job in the lead-up to the crisis. Rather, that tumultuous period exposed how little they were empowered institutionally, how poorly data was shared across desks, and perhaps to some extent, a lack of the technical capacity required to be creative about evaluating crisis scenarios and extreme, so-called “black swan” events. While far from perfect, today’s risk management function has seen renewed emphasis and investment in each of these areas, and much of that is driven by improvements in technology.

Once seen as ancillary, chief risk officers (CROs) are now plugged in and running their own teams, interfacing with portfolio managers and the CIO on the buy side, and sitting as equals in sell-side boardrooms. The platforms risk data sits on are increasingly agnostic to coding language and architectural orientation, allowing new risk protocols to be developed and delivered more quickly, and aligning current risk metrics across desks in real time. Finally, recent leaps in artificial intelligence (AI) like deep-learning techniques allow firms to examine new paradigms beyond value at risk (VaR), and test pricing methodologies that would have never been imagined a decade earlier. Perhaps apart from trading, few areas have seen the kind of comprehensive forward momentum risk tech has attracted, and rightfully so.

The question is whether it can keep up. As we move further into a new era, and the more automated investment activities become over time, the more likely a new front—tech risk—begins to really open up, too. On Waters’ fiftieth anniversary, the refrain will likely go that beta is calculated and baked into everything an investor or bank does, without any human eyes. Trading by instinct and elective portfolio rebalancing will be a thing of the past—and that can be a great thing. For some investment giants like BlackRock, it increasingly already is. “Artificial Intelligence allows us to ask questions that humans never imagined asking before,” explains Jeff Shen, the firm’s Co-CIO of Active Equity and Co-head of Systematic Active Equity. “Historically, fundamental investing focused on developing a deep understanding of individual companies, while quantitative investing sought a baseline understanding across several companies. With artificial intelligence, it is now increasingly possible to see both breadth and depth – taking you from a view of the forest to a view of an individual tree.”

And yet conversely, the possibilities rise that a future calamitous market event could be sparked—or made considerably worse—by this same technology simply doing its job. Then, the concerns will be the same as they’ve always been, down to quantification and possibility: just how unforeseeable was the event, and was the industry collectively prepared and willing to manage it without collapse?

Fintech(s): New Look, Old Objectives?

In the life of a financial journalist, few things prove more curious than a dramatic change in grammar. One of those hit Waters when, sometime in 2015, the portmanteau “fintech” became an officially accepted and trendy noun for a startup company… working in fintech. Just how that particular rhetorical choice happened, no one quite knows. But as for the reasons why a startup culture—and fintechs—finally arrived in the capital markets? Those are more explainable.

Financial services has long been an obvious mark for Silicon Valley-style disruption, following in the wake of numerous other industries with incumbents perceived as slow and analog. This began with consumer banking, in peer-to-peer payment apps and early adoption of cryptocurrency technologies, but over time it has certainly expanded to more core functions in the investment and trading lifecycles. Meanwhile investment banks, in particular, refused to accept their stodgy reputation as recruiting top tech talent rapidly became more difficult. They opened up funding for incubators and challenge competitions not unlike those that have flourished in northern California and London’s Hoxton—with the added benefit that these giants could keep their finger on the pulse and, if necessary, buy up the emerging technology that has resulted. Legacy vendors have followed suit—in messaging and spirit, anyway, if not always in changing tech and services.

On those cultural measures alone, fintechs have already had a significant influence on the industry—spiking the level of venture capital flowing into the space, and eventually the initial coin offerings (ICOs) too, for better or worse. As a result, these startups also come with different DNA, and different options, than their garage-born predecessors. They have guidance and often funding from those who were there the first time, though not exactly greenfield in front of them.

A few unicorns have come through along the way. But as for their broader results, the fintechs’ progress has thus far been uneven—Citi and Wells Fargo haven’t exactly gone the way of Blockbuster and Sears. And that’s okay. There are obvious positives: asset managers deploying technology originally built for robo-advisors elsewhere in their operations is one example; solving certain complex post-trade calculations, like adoption of new portfolio compression tools, another. And the real potential of artificial intelligence and distributed ledgers is finally catching up with their respective hype cycles. In a few short years, the story could be very different.

Still, in 2018 it is fair to say that fintechs for capital markets are, on the whole, still surviving their early days. After the space really heated up, things have steadied. For aspiring technologists and entrepreneurs alike, it’s an interesting moment to make a plan, or to make an exit: do you want to be a PayPal, or an Advent Software, or a Bloomberg? The first gained its founders fame; the next simply became famously good, and we all know what became of the last guy and his terminal. Either way, there’s no shortage of old problems to solve. And thankfully, those problems have a newish—some might even say sexy—label.

“Today, innovation is a constant force,” says Blackstone Group CTO William Murphy. “The world is moving so fast and becoming so complicated that the only way to stay safe and current is to embrace systems throughout the firm’s workflow. Even the old school parts of financial services firms are being transformed by fintech startups or disruptive technologies developed internally, pushing the boundaries on the change the business can handle. The talent we all need is the ability to be entrepreneurial, agile and creative—able to take on new information rapidly and create new products to add value for clients faster than ever before.”

Lust for Ledgers

In an October 2014 dispatch from Sibos, Waters’ audience was introduced to a strange-sounding database technology, while recapping a presentation from a then-20-year-old speaker who decidedly did not fit in with the typical conference fare: Vitalik Buterin, the co-founder of Ethereum. In the roughly four years since, well over 600 articles have come and gone on the subject of blockchain.

After an early and fitful period of detaching from the stigmas of bitcoin (which has gotten its own, even more belated rehabilitation since then), distributed ledgers may well prove to be the biggest game-changers in Waters’ first 25 years. They have brought out superlatives from many corners of the industry that have, at times, bordered on the Messianic. But for the magazine, what makes the blockchain story so compelling is its drama: those who know it best see the long game ahead, and dangers, rather than the cure-all others might have you believe. It’s not unlike the initial releases of iOS and Android in 2007 and 2008: it was clear they would forever augment how we interact with each other, access information and engineer new behaviors. But it was unclear just when, and unclear whether that would be universally for the better over time. (Did you just check your phone?)

As for ledgers, today they are probably hovering around the iPhone 2. The benefits are clear; everyone wants one and they are making our old technology look, well, ancient. But your friend has one—not your whole family. They’re mostly still sloshing around the ecosystem with limited user bases and applications, like trade finance or private chains for OTC instruments that seldom trade and settle even more slowly, like securitized debt. Much remains to be hashed out; not just the kinks. Efficient performance for faster-moving, regulated public markets; mutability and chain augmentation to address data errors; interoperability among the handful of chains that have gained traction; data sovereignty issues and, of course, liability and recourse in case of failure—these are just some of the key points to solve for. That’s led some to argue that the biggest beneficiaries of blockchain adoption in the short term may not be market participants, at all—but rather their lawyers.

Yet the knock-on benefits are already present, too. As one blockchain team lead at a tier-one bank recently put it, the noticeable change is in institutional thinking and collaboration—carving out the right fits, and getting prepared. Indeed, her role is to promulgate the bank’s blockchain, she said, but the real goal is unlocking communication: meeting with the business, hashing out their needs, and determining what the right solution should look like. Sometimes, a blockchain makes little sense, she added. Because distributed ledgers are append-only and push much of the counterparty identification work forward, the same goes for market infrastructure providers and technology incumbents who must work together in getting more data processing done faster ahead of blockchain-based settlement and reconciliation. In reaching an “adapt or die” moment, many are now at least taking a shot at the former, rather than risk going the way of the Blackberry.

Those twin indicators—a kind of sobriety about the limits of ledgers, and at the same time an acknowledgment that they’re no passing fad—may be the strongest proof so far of the industry’s blockchain maturity and readiness for the technology. Now comes the hard part. “Indeed, blockchain, distributed ledgers and smart contracts have attracted the attention of financial institutions since 2014,” says Dr. Lee Braine, a ledgers expert within Barclays’ Investment CTO office. “So far, the industry has experimented with these innovative technologies to explore how they could help solve actual business problems. Groups of banks have partnered with fintech companies that bring both novel technologies and more dynamic ways of working. Collaboration has been a hallmark, with peers exploring potential common utility technologies. The goals include simplifying existing processes, rationalising infrastructure, and ultimately reducing cost. With trade associations such as ISDA looking to provide derivatives blockchain standards, and market infrastructures such as DTCC and CLS looking to deploy industry blockchain solutions, we may soon witness blockchain’s coming of age in financial markets.”

September 11, 2001: A Day We Will Never Forget

It seems like yesterday that the world changed forever at 8.46 AM on that crystal-clear Tuesday morning in downtown Manhattan.

The Waters staff have, naturally, discussed the events of that day, given Waters’ poignant association with September 11, even though our youngest staff member hadn’t even started high school at the time. But the families of the 16 Risk Waters staff members who lost their lives that day will find little consolation in the knowledge that, as a group, we have spoken about their loved ones, and, while we might not know them, they have been in our thoughts in the lead-up to every anniversary of the day their lives were turned upside down.

On early Thursday morning on the 10th anniversary of the attacks, as I was readying myself to leave the house and make my way to work, I briefly watched a BBC interview with Margaret (Maggie) Owen, the mother of Melanie de Vere, one of the Risk Waters “16”. For me, the interview “particularized” for the first time in years the emotional devastation of those caught up in the attacks, poignantly conveyed by a mother longing for her daughter, about whom people still talk.

In the days and weeks following “that Tuesday,” resolutions were made and “bucket lists” compiled, but as is so often the case after an emotionally charged episode, we find it all too easy to slip back into normality, fixating on the trivialities of the day-to-day grind, blissfully ignorant of the bigger picture, revealed fleetingly during those flashes of intense, yet infrequent, introspection.

Perhaps now, on the anniversary of that dreadful day, it is time to revisit those resolutions and actually eat with the good cutlery rather than just talking about it? Perhaps now is the time to follow through on those plans to climb that mountain, to serve in that soup kitchen during the festive season, and to enhance those relationships with our significant others?

From all of us at Waters, to all of you: We’re thinking of you at this time.

Victor Anderson, editor-in-chief.

We remember our friends and colleagues who lost their lives in the World Trade Center on September 11, 2001.

Sarah Ali Escarcega

Freelance Marketing Consultant

Oliver Bennett

Staff Writer, Risk

Paul Bristow

Senior Conference Producer

Neil Cudmore

Sales Director, Waters

Melanie de Vere

Publisher, Waters Reference Products

Michele du Berry

Director of Conferences

Elisa Ferraina

Senior Conference Sponsorship Coordinator

Amy Lamonsoff

Conference Coordinator, North America

Sarah Prothero

Conference Operations Manager

David Rivers

Editorial Director, New York

Laura Rockefeller

Freelance Delegate Coordinator

Karlie Rogers

Divisional Sponsorship Manager

Simon Turner

Board Director

Celeste Victoria

Conference Telesales Executive

Joanna Vidal

Events Coordinator

Dinah Webster

Head of North American Sales

We also remember our 65 colleagues from the industry who attended the Waters conference in the World Trade Center as delegates, speakers, sponsors and exhibitors.


Copyright Infopro Digital Limited. All rights reserved.
